I am a Research Engineer at Meta Reality Labs, working on GPU systems, real-time rendering infrastructure, and performance optimization for photorealistic avatars in VR.

My work focuses on C++/Vulkan rendering backends, GPU profiling and benchmarking, shader and render-graph optimization, developer tooling, and Python/C++ integration for neural rendering workflows. I am also interested in ML inference infrastructure, GPU runtime systems, and low-level performance engineering.

I earned my master’s degree in Computer Vision from Carnegie Mellon University. Previously, I completed my bachelor’s degree in Computer Science and Technology at Zhejiang University, where I was advised by Prof. Hongzhi Wu.

More broadly, my interests span computer graphics, 3D vision, and neural rendering.

📖 Education

Carnegie Mellon University, Pittsburgh, U.S. Sep 2023 - Dec 2024

- Program: Master of Science in Computer Vision

- Cumulative QPA: 4.0/4.0

Zhejiang University, Hangzhou, China Sep 2019 - Jun 2023

- Degree: Bachelor of Engineering

- Honors degree from Chu Kochen Honors College

- Major: Computer Science and Technology

- Overall GPA: 94.6/100 (3.98/4.0)

- Ranking: 1/125

💻 Experience

Meta Reality Labs, Pittsburgh, U.S. Jan 2025 - Present

- Position: Research Engineer

- Working on GPU systems, real-time rendering infrastructure, and performance optimization for photorealistic avatars in VR.

- Optimized a Vulkan-based rendering backend through depth culling, tiled shading, render-graph refactoring, async compute queues, and shader-level tuning.

- Built Python/C++ developer tooling for prototyping, profiling, benchmarking, and performance analysis of neural rendering workflows.

- Implemented CUDA-Vulkan interoperability for PyTorch CUDA tensors using external memory/semaphore mechanisms to reduce unnecessary CPU-GPU copies.

ByteDance / TikTok, San Jose, U.S. May 2024 - Aug 2024

- Position: AR Effect Engineer Intern

- Optimized and extended the Visual Effects system in Effect House, focusing on GPU particle memory usage, graph-based VFX authoring, and neural 3D scene rendering.

Microsoft Research Asia, Beijing, China Mar 2022 - Jun 2023

- Position: Research Intern, Internet Graphics Group

- Advisors: Dr. Yizhong Zhang and Dr. Yang Liu

- Worked on 3D reconstruction, depth fusion, and real-time AR/SLAM systems, combining computer vision algorithms, CUDA acceleration, C++ systems engineering, and iOS AR visualization.

📝 Projects

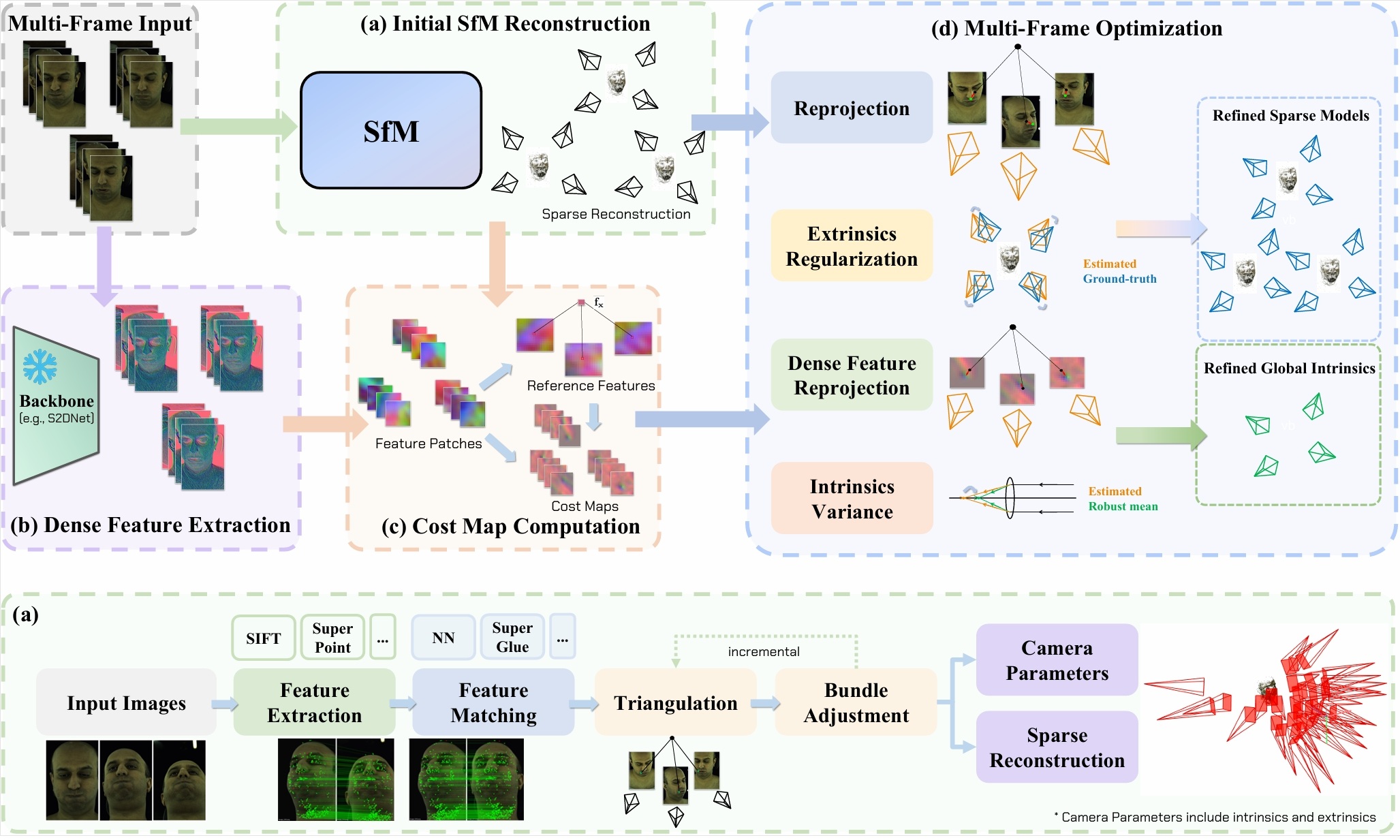

Multi-Cali Anything [Project Page] [Paper] [Code] Sep 2024 - Dec 2024

Research Project at CMU; Accepted at IROS 2025

A dense-feature-driven camera calibration system for large-scale camera arrays that refines camera parameters directly from scene data, reducing the need for dedicated checkerboard captures.

- Developed a CUDA-accelerated C++ calibration system that integrates dense feature refinement into existing SfM pipelines.

- Implemented custom CUDA kernels for keypoint feature extraction, reference feature computation, and dense cost-map generation used in feature-metric optimization.

- Designed loss and regularization terms for dense feature reprojection, extrinsics alignment, and multi-frame intrinsics consistency.

- Evaluated on the Multiface dataset, achieving calibration accuracy comparable to dedicated checkerboard calibration while improving downstream reconstruction quality.

Effect House Visual Effects System May 2024 - Aug 2024

Internship Project at ByteDance

Optimization and feature work on the Visual Effects (VFX) system of Effect House, TikTok's AR effect creation platform.

- Optimized GPU particle attribute buffer allocation and data layout, reducing memory usage by more than 50% for most VFX templates.

- Designed a simulation node in the VFX graph editor, allowing users to build customizable physics simulation workflows beyond standard particle effects.

- Implemented a 3D Gaussian Splatting output node to render neural 3D scenes through the VFX particle pipeline.

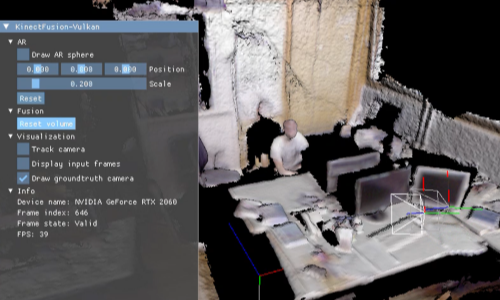

KinectFusion-Vulkan [Project Page] Mar 2024 - Apr 2024

Course Project for Robot Localization and Mapping (16-833)

A Vulkan-based implementation of KinectFusion that integrates camera tracking, scene reconstruction, GPU-accelerated fusion, and real-time graphics rendering.

- Implemented a real-time 3D reconstruction system in C++/Vulkan, integrating GPU-accelerated fusion and visualization.

- Used Vulkan compute and graphics pipelines to support real-time reconstruction and AR rendering on RGB-D sequences.

- Rendered AR effects using estimated camera poses from the reconstruction pipeline.

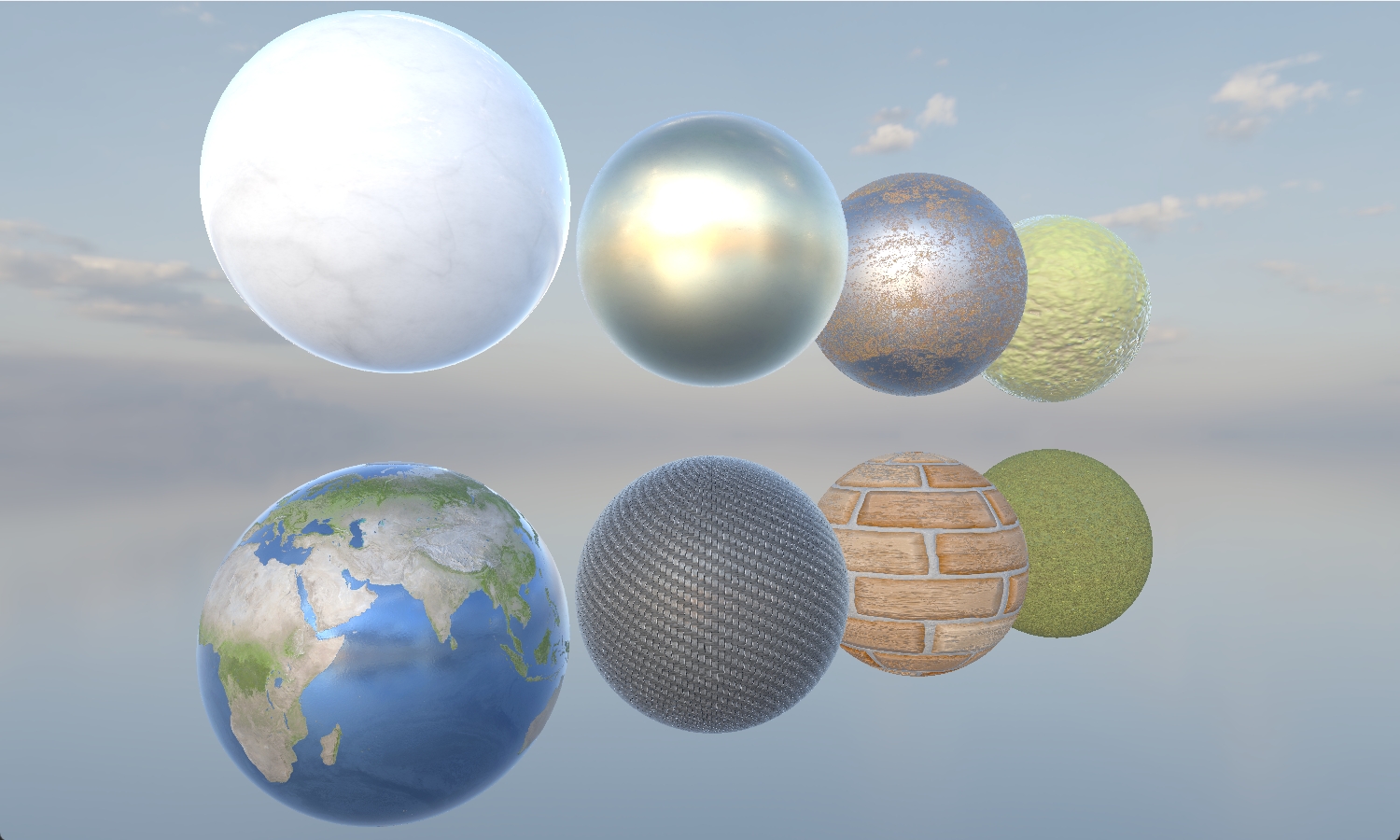

Render72: Real-Time Vulkan Renderer [Project Page] Jan 2024 - Apr 2024

Course Project for Real-Time Graphics (15-472)

A real-time Vulkan renderer with a modular graphics backend for scene loading, animation, multi-pass rendering, and high-quality shading.

- Implemented deferred shading, shadow mapping, PBR/IBL, parallax mapping, SSAO, and multiple material types.

- Built rendering passes with attention to GPU memory usage, synchronization, and frame-time stability.

- Supported scene loading, animation playback, environment lighting, analytical lights, and screen-space effects.

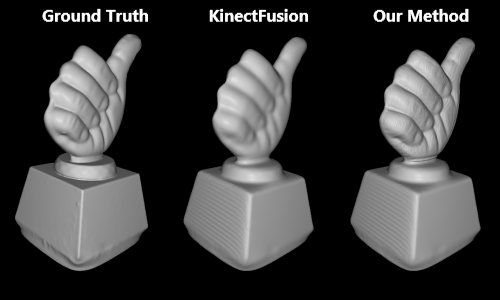

Anti-Blur Depth Fusion Based on Vision Cone Model Nov 2022 - Jun 2023

Research Project at Microsoft Research Asia

A CUDA-accelerated depth fusion method designed to preserve high-frequency geometric details when fusing low-resolution depth images.

- Designed a vision-cone-based loss formulation that models each observed depth pixel as the average depth within its pixel cone.

- Implemented CUDA kernels to accelerate performance-critical loss computation and enable real-time reconstruction visualization.

- Evaluated the method on SDF voxel and mesh representations, improving reconstruction detail over baseline fusion methods.

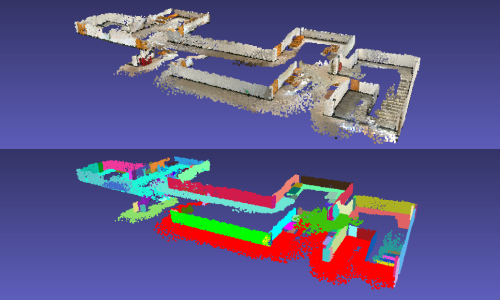

Real-Time SLAM System Based on ARKit Framework Mar 2022 - Oct 2022

Research Project at Microsoft Research Asia

A real-time iOS AR scanning and reconstruction system built on ARKit and a C++ SLAM/reconstruction backend.

- Integrated a C++ SLAM/reconstruction backend into an iOS ARKit app through an Objective-C bridge, SwiftUI interface, and Metal-based real-time visualization.

- Improved localization robustness using plane constraints from indoor scenes with rich planar structures.

- Implemented loop closure using vocabulary-tree retrieval and confusion-matrix filtering, and added UI-based confirmation of detected loops.

C Compiler [Project Page] Apr 2022 - Jun 2022

Course Project for Compiler Principles

A compiler for a C-like language, built in C++ with Flex/Bison and LLVM.

- Designed the grammar and AST representation, implemented code generation, and wrote test cases and documentation.

- Used Flex/Bison for lexical analysis and parsing, and the LLVM 14 C++ API to generate LLVM IR and object code.

- Built an AST visualization tool to inspect parsed program structure.

🔧 Skills

- Programming: C/C++, Python, GLSL, JavaScript, Swift, Objective-C

- GPU, Graphics, and Systems: Vulkan, CUDA, OpenGL, Metal, GPU profiling, shader optimization, render graphs, async compute, CUDA-Vulkan interop

- ML / CV / Runtime Infrastructure: PyTorch, pybind11/nanobind, 3D Gaussian Splatting, neural rendering, OpenCV, model evaluation workflows

- Tools: CMake, Git, LLVM, Flex/Bison, Doxygen, Linux